On-Demand GPU Infrastructure Procurement for AI Startups

AI startups need GPU compute, but procurement is complex. Learn about on-demand GPU options, pricing models, and how to scale efficiently.

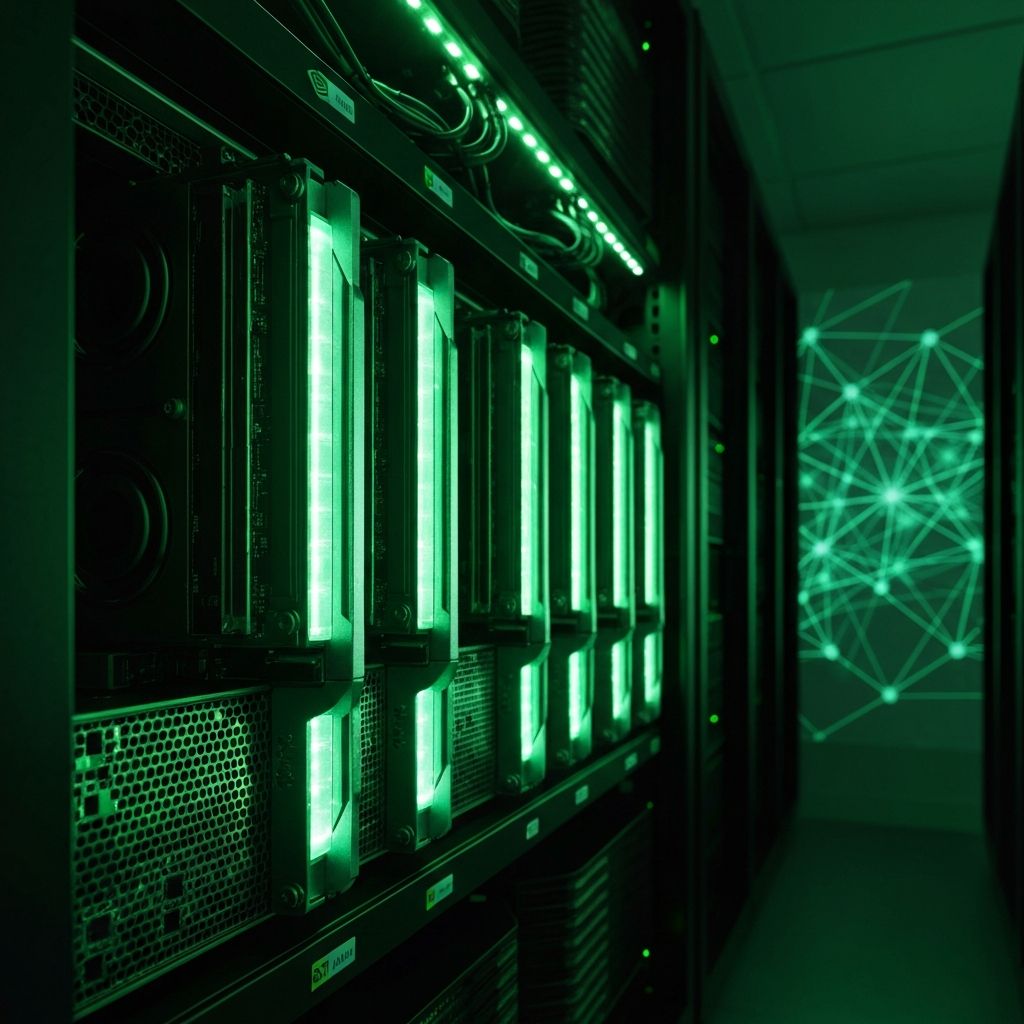

The GPU Challenge for AI Startups

Training machine learning models requires significant GPU compute. A single training run for a large language model can cost $100,000 or more in cloud GPU hours. For AI startups in Bangalore and across India, GPU procurement is both a technical and a financial challenge.

Understanding Your GPU Options

1. Cloud GPU (Pay-as-you-go)

Providers: AWS, Google Cloud, Azure, Lambda Labs, CoreWeave

Pros:

- No upfront investment

- Scale up and down within minutes, subject to provider capacity

- Access to the latest hardware (H100, A100)

Cons:

- Expensive at scale

- Availability constraints during high demand

- Data transfer costs add up

Best for: Experimentation, burst training, variable workloads

2. Reserved GPU Instances

Commit to a 1-3 year term in exchange for discounts of roughly 30-60% off on-demand pricing.

Pros:

- Predictable costs

- Guaranteed capacity

- Significant savings at scale

Cons:

- Long-term commitment

- Capacity planning required

- Hardware may become outdated before the term ends

Best for: Steady-state training workloads, production inference
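
The trade-off between on-demand and reserved pricing comes down to utilization: a reservation bills every hour at the discounted rate, while on-demand bills only the hours you use. The sketch below is a rough illustration under that simplified billing model. The function names, the 730-hour month, and the example rate and discount are assumptions for illustration, not figures from any provider.

```python
def reserved_break_even_utilization(discount: float) -> float:
    """Fraction of hours a GPU must be busy for a reservation to beat on-demand.

    Assumes the reservation bills every hour at (1 - discount) of the
    on-demand rate, while on-demand bills only the hours actually used.
    """
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in [0, 1)")
    return 1.0 - discount


def monthly_cost(on_demand_rate: float, utilization: float, discount: float,
                 hours_in_month: float = 730.0) -> dict:
    """Compare monthly cost per GPU for on-demand vs. reserved pricing."""
    on_demand = on_demand_rate * hours_in_month * utilization
    reserved = on_demand_rate * (1.0 - discount) * hours_in_month
    return {"on_demand": on_demand, "reserved": reserved}


if __name__ == "__main__":
    # Hypothetical inputs: 3.00 per GPU-hour on-demand, 40% reserved discount.
    for utilization in (0.3, 0.6, 0.9):
        costs = monthly_cost(3.00, utilization, 0.40)
        cheaper = min(costs, key=costs.get)
        print(f"{utilization:.0%} utilization -> {cheaper} is cheaper: {costs}")
```

Under these assumptions, a 40% discount only pays off once the GPU is busy more than about 60% of the time, which is why reservations suit steady-state workloads rather than bursty experimentation.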

3. Private GPU Infrastructure

Own or lease dedicated GPU servers, hosted in a colocation facility or on-premises.

Pros:

- Lowest long-term cost

- Full hardware control

- Data sovereignty

- No egress fees

Cons:

- High upfront investment

- Maintenance overhead

- Slower to scale

Best for: Large-scale training, sensitive data, long-term AI roadmaps
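
Whether private infrastructure really delivers the lowest long-term cost depends on how quickly the upfront spend is recovered. A minimal sketch of that payback calculation follows. The function name and all input figures are hypothetical, and the model ignores financing costs, resale value, and hardware depreciation.

```python
import math


def break_even_months(capex: float, monthly_opex: float,
                      monthly_cloud_cost: float):
    """Months until owning becomes cheaper than renting equivalent capacity.

    capex: upfront hardware and deployment cost
    monthly_opex: colocation, power, and maintenance per month
    monthly_cloud_cost: what the same workload would cost in the cloud

    Returns None when the cloud bill does not exceed the private opex,
    because the upfront spend is then never recovered.
    """
    monthly_saving = monthly_cloud_cost - monthly_opex
    if monthly_saving <= 0:
        return None
    return math.ceil(capex / monthly_saving)


if __name__ == "__main__":
    # Hypothetical figures in any single currency.
    months = break_even_months(capex=300_000, monthly_opex=5_000,
                               monthly_cloud_cost=20_000)
    print(f"Break-even after {months} months")
```

If the result approaches or exceeds the period over which you expect the hardware to stay useful, cloud or reserved capacity is likely the safer choice.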

GPU Hardware Comparison

| GPU Model | VRAM | FP16 Tensor Performance (dense) | Best For |
|---|---|---|---|
| NVIDIA H100 (SXM) | 80GB | 989 TFLOPS (1,979 with sparsity) | Large model training |
| NVIDIA A100 | 40/80GB | 312 TFLOPS | General ML workloads |
| NVIDIA L40S | 48GB | 362 TFLOPS | Inference, fine-tuning |
| NVIDIA A10G | 24GB | 125 TFLOPS | Inference, small training |
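
VRAM is often the first constraint when matching a model to a GPU from the table above. The sketch below uses a common rule of thumb: about 2 bytes per parameter for FP16 inference weights, and about 16 bytes per parameter for mixed-precision training with Adam. It deliberately ignores activations and KV cache, and it assumes model state can be sharded evenly across GPUs. The function names and the 90% usable-memory fraction are illustrative assumptions.

```python
import math

# Rule-of-thumb bytes of model state per parameter (activations excluded).
BYTES_PER_PARAM_INFERENCE_FP16 = 2   # FP16/BF16 weights only
BYTES_PER_PARAM_TRAINING_ADAM = 16   # FP16 weights and gradients (2 + 2),
                                     # FP32 master weights and two Adam moments (4 + 4 + 4)


def model_state_gb(params_billion: float, bytes_per_param: int) -> float:
    """Model state in GB (1 GB = 1e9 bytes) for a model of the given size."""
    # (params_billion * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billion * bytes_per_param


def min_gpus(params_billion: float, bytes_per_param: int, vram_gb: float,
             usable_fraction: float = 0.9) -> int:
    """Smallest GPU count whose combined usable VRAM holds the model state.

    Assumes perfectly even sharding, so treat the result as a lower bound.
    """
    needed = model_state_gb(params_billion, bytes_per_param)
    return math.ceil(needed / (vram_gb * usable_fraction))


if __name__ == "__main__":
    # A hypothetical 7B-parameter model.
    print(model_state_gb(7, BYTES_PER_PARAM_INFERENCE_FP16), "GB for FP16 inference weights")
    print(model_state_gb(7, BYTES_PER_PARAM_TRAINING_ADAM), "GB of training state with Adam")
    print(min_gpus(7, BYTES_PER_PARAM_TRAINING_ADAM, vram_gb=80), "x 80GB GPUs at minimum for training")
```

Real requirements will be higher once activations, batch size, and framework overhead are included, so use this only as a first filter when shortlisting hardware.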

Procurement Strategies for Indian Startups

Start with Cloud, Plan for Private

Most AI startups should begin with cloud GPUs for flexibility. As workloads stabilize and costs become predictable, transitioning to reserved or private infrastructure makes sense.

Consider Indian Data Centers

Data localization requirements and latency considerations may favor Indian data centers. Providers like Yotta, CtrlS, and NTT offer colocation with GPU hosting capabilities.

Hybrid Approaches

Many startups use a mix:

- Cloud GPUs for experimentation and burst training

- Reserved instances for production inference

- Private clusters for sensitive data or sustained training

Cost Optimization Tips

- **Use spot/preemptible instances**: Discounts of up to about 90% off on-demand pricing for fault-tolerant workloads that checkpoint regularly

- **Right-size your instances**: Monitor GPU utilization; many teams over-provision

- **Schedule training jobs**: Run large jobs during off-peak hours for better availability

- **Optimize your code**: Mixed-precision training (FP16/BF16) can cut activation memory by up to about half and speeds up training on Tensor Core GPUs
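
Spot savings are only realized if interruptions do not waste too much work. The sketch below estimates the effective cost of a spot job under a simple model: each interruption loses, on average, half a checkpoint interval of progress plus a fixed restart time. The function name, the interruption rate, and every number in the example are assumptions for illustration, not provider statistics.

```python
def spot_job_cost(base_hours: float, on_demand_rate: float,
                  spot_discount: float, interruptions_per_hour: float,
                  checkpoint_interval_hours: float,
                  restart_hours: float = 0.25) -> float:
    """Expected cost of a job on spot capacity, including redone work.

    Simplification: interruptions that occur while redoing lost work
    are ignored, so this slightly underestimates the true cost.
    """
    expected_interruptions = interruptions_per_hour * base_hours
    # Average loss per interruption: half a checkpoint interval plus restart time.
    lost_hours = expected_interruptions * (checkpoint_interval_hours / 2 + restart_hours)
    spot_rate = on_demand_rate * (1.0 - spot_discount)
    return (base_hours + lost_hours) * spot_rate


if __name__ == "__main__":
    # Hypothetical job: 100 GPU-hours, 3.00 per hour on-demand, 70% spot discount,
    # one interruption every 20 hours on average, hourly checkpoints.
    spot = spot_job_cost(100, 3.00, 0.70, interruptions_per_hour=0.05,
                         checkpoint_interval_hours=1.0)
    on_demand = 100 * 3.00
    print(f"spot: {spot:.2f} vs on-demand: {on_demand:.2f}")
```

Shorter checkpoint intervals reduce the work lost per interruption, which is what makes a workload fault-tolerant enough to benefit from spot pricing.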

Working with a Procurement Partner

GPU procurement involves navigating availability constraints, import logistics (for hardware), and complex pricing models. Tekfin helps AI startups:

- Access GPU capacity from multiple providers

- Negotiate reserved instance pricing

- Procure and deploy private GPU clusters

- Manage hybrid infrastructure

Integration with Your Stack

GPU infrastructure doesn't exist in isolation. Ensure your software licensing covers ML frameworks and tools, and that your general IT procurement supports data engineering workflows.

Getting Started

Not sure which GPU strategy fits your startup? Connect with Tekfin for a free consultation on AI infrastructure procurement.